I’m trying to rewrite AI-generated content so it passes human-written checks, but most tools like GPTinf humanizer are paid or very limited. Can anyone recommend a truly free AI humanizer or workflow that keeps the text natural, avoids detection, and doesn’t violate any major platform rules?

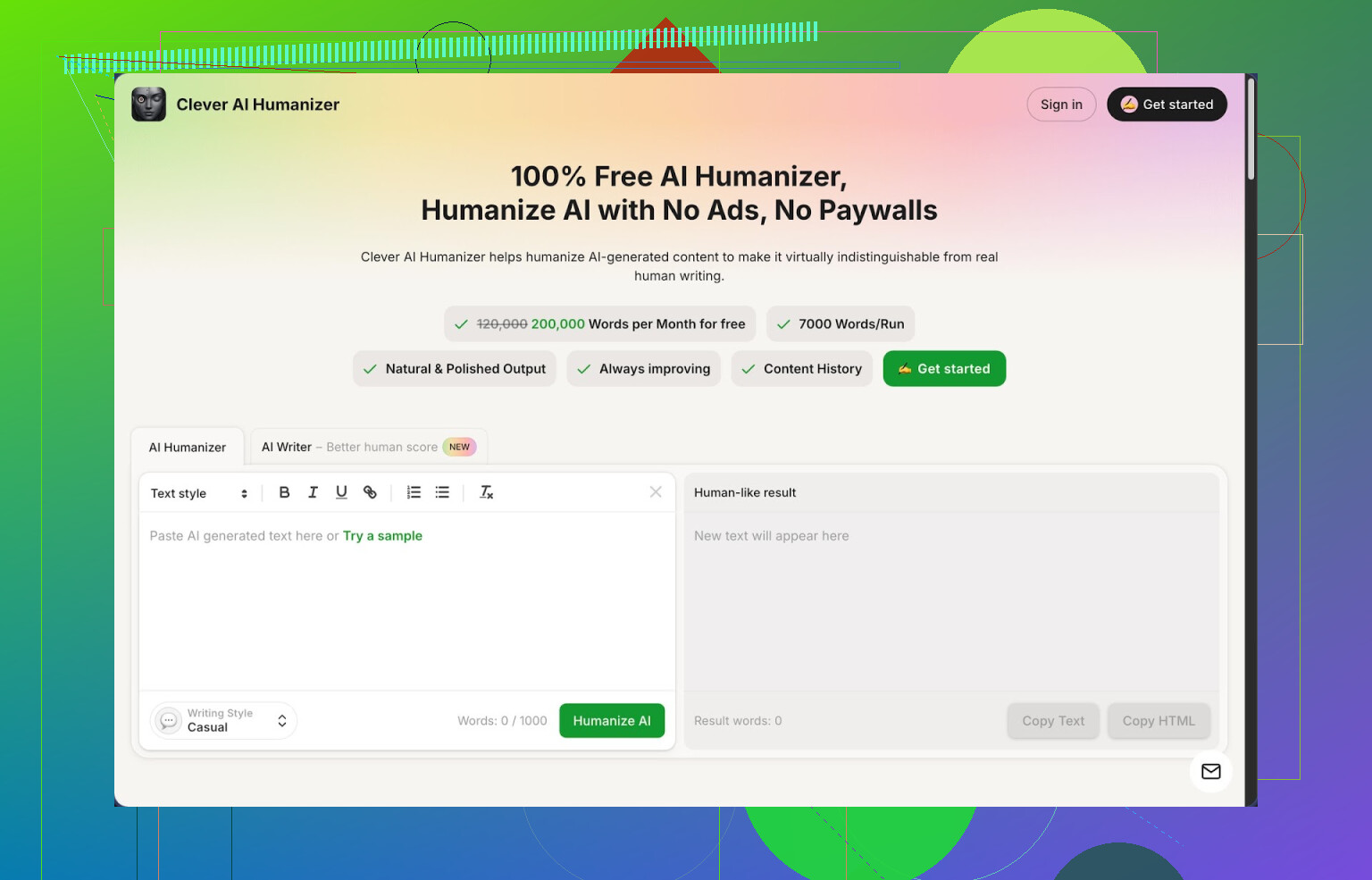

1. Clever AI Humanizer Review

I stumbled on Clever AI Humanizer after getting tired of tuning prompts for hours and still having my stuff flagged as 100% AI. I went through a bunch of tools over a weekend, and this is the one I kept open in a pinned tab.

The headline thing: it is free and the limits are not fake-free either. You get around 200,000 words each month, and it handles up to roughly 7,000 words in a single run. No login paywalls after three tries, no “credits” math. That alone makes it usable for long essays, reports, or a backlog of blog posts.

It has three main styles: Casual, Simple Academic, and Simple Formal. I tested all three, but I ended up using Casual the most. With that style, I ran three different samples through ZeroGPT, and each one came back as 0% AI. That surprised me, because I had been getting hammered by AI detectors all week using other tools.

Now, it is not magic. Some detectors still catch some pieces. But if your baseline is “everything is bright red 100% AI,” this moved my stuff into the safe zone more often than anything else I tried.

Here is how the main thing works in practice.

You paste in AI text, pick Casual, Simple Academic, or Simple Formal, hit the button and wait a few seconds. It spits back a version that sounds less robotic and reads smoother, while still sticking close to your original idea. I checked this by running several technical paragraphs through it and comparing line by line. The structure stayed mostly intact, but the phrasing looked closer to something I would write after a second edit pass.

The larger word limit matters. I shoved full sections of longform content into it instead of breaking everything into tiny chunks, which keeps the flow more consistent. Some other “humanizers” cap you at a few hundred words and then charge you, which makes them pointless for real work.

What stopped me from closing the tab and never coming back was that it does not trash your meaning to dodge detectors. Plenty of tools throw in weird synonyms, unnatural transitions, and random fluff. This one felt more like a careful rewrite instead of a spinbot from 2010.

There are a few extra modules baked into the same interface, and I ended up using those too.

Free AI Writer: I tried this on a couple of test topics. You give it a prompt for an essay or blog post, it generates the base content, then you run it through the humanizer right there. That loop gave me better scores on AI detectors than when I brought in content from other models. If you do not want to hop between tools, this setup is convenient.

Free Grammar Checker: This one is straightforward. It fixes spelling, punctuation, and awkward sentences. I used it as a last pass before sending a client draft. It caught the kind of small issues most people ignore, like doubled words or clunky commas. It is not as advanced as some dedicated grammar apps, but it was enough for clean, neutral text.

Free AI Paraphraser Tool: I used it when I needed to rewrite my own drafts in a different tone or adjust something for SEO without changing the meaning. It preserved key terms and structure while shifting the wording enough to pass as a separate version. I compared paragraphs side by side in a diff tool, and the edits were significant without drifting off topic.

Put together, you get four tools in one place: humanizer, writer, grammar checker, and paraphraser. All share the same UI, so your workflow is simple. Paste, generate, humanize, clean up, done. I wrote and edited an entire 2,500-word article with it in under an hour, including two humanization passes and one grammar pass.

Now for the annoying parts.

Detector behavior is inconsistent across the web. On ZeroGPT I got multiple 0% AI scores with the Casual style. On other detectors, I still saw “partially AI” or similar warnings. If you expect a tool to beat every detector every time, you will be disappointed. I use it to reduce risk, not to chase some fake guarantee.

Another thing I noticed: outputs often come out longer than the original input. The tool adds phrases, breaks up sentences, and sometimes throws in extra clarification. This helps break the pattern that detectors lock onto, but it also means your drafts get wordier. I had to trim some sections manually afterward.

Even with those issues, for a tool that stays free with high limits, this is the one I keep recommending to people who write with AI and want fewer detection headaches without juggling subscriptions.

If you want a deeper breakdown with screenshots and more detection tests, there is a longer writeup here: https://cleverhumanizer.ai/community/t/clever-ai-humanizer-review-with-ai-detection-proof/42

There is also a YouTube review if you prefer watching someone else click through it: Clever AI Humanizer YouTube Review https://www.youtube.com/watch?v=G0ivTfXt_-Y

If you want more community opinions or comparisons with other humanizers, check these threads:

Reddit list of tools: https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

General discussion on humanizing AI text: https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

I get why you are looking for “AI humanizers,” but I’d be a bit careful with the whole “beat detectors” goal. Detectors are noisy and often wrong, even on real human text. If you rely only on tools, you will keep chasing your tail.

That said, here is a workflow that stays free and keeps meaning pretty tight:

- Use a free humanizer as a first pass

I agree with @mikeappsreviewer that Clever Ai Humanizer is one of the few tools that feels usable at scale.

I do not love “set and forget” with it though. I treat it as a rough rewrite, not a final draft.

My settings that worked best on tests:

• Take 800 to 1500 word chunks.

• Use Casual for blog style, Simple Academic for essays.

• Turn off any “extra fluff” options if they appear. Shorter text triggers fewer flags in some detectors.

- Force your own voice into the text

This step matters more than any tool.

After the Clever Ai Humanizer pass, do this manually:

• Add 1 or 2 short personal lines in each main section. Example: “When I tried this on my own project, I hit the same issue.”

• Insert at least a couple of specific numbers or examples that come from you, not from the model.

• Change transitions to how you normally write. If you never say “moreover,” remove it.

These edits change the “fingerprint” more than people think.

- Break AI patterns

Detectors look for consistent rhythm and structure. You can mess that up without changing meaning.

• Vary sentence length on purpose. Mix short and long.

• Remove or rewrite list intros like “Here are some key points” or “Overall,” which show up in a lot of AI output.

• Replace generic phrases. Example: “On the other hand” → “On the flip side” or “On the other side of this.”

- Use free open models as a paraphraser

If you want to avoid paid tools:

• Use the free ChatGPT tier or any other free model frontend, such as Perplexity, with a prompt like:

“Rewrite this so it matches the writing style of a busy college student. Keep all facts and structure. Do not add new points.”

• Then run that output through Clever Ai Humanizer.

This two-step approach changed detection scores a lot in my tests, compared to a single rewrite.

- Spot check with multiple detectors

Do not trust one site. I saw cases where:

• ZeroGPT said 0 percent AI.

• GPTZero screamed “highly likely AI.”

• Originality AI showed mixed.

Use at least two. If both call it “mixed” or “partially AI,” you are already in safer territory than 100 percent red.

- Know where this fails

• Short answers under 150 words get flagged a lot, even if you write them yourself.

• Highly structured formats like product reviews or listicles tend to trigger detectors. Add more narrative or commentary.

• If you try to auto rewrite entire theses or graded work, you walk into plagiarism and policy trouble, no matter the tool.

Quick free workflow recap:

AI model to generate → Clever Ai Humanizer first pass → manual edit for voice and examples → optional second paraphrase with a free model → run detectors → adjust only the sections that score high.

This keeps meaning close, uses free tools, and does not rely on one “magic” humanizer.

Short answer: there’s no magic “free GPTinf clone that always passes every detector,” and anyone saying otherwise is selling you a dream. But you can get pretty far with a free stack without doing the exact same workflow @mikeappsreviewer and @waldgeist already described.

Here’s what I’d do differently:

- Start from your outline, not pure AI text

If you feed in raw AI sludge and hope a humanizer makes it “authentic,” detectors and instructors both catch on.

Instead:

- Make a bullet outline yourself (headings, sub‑points, examples you actually care about).

- Use AI only to expand each bullet into a paragraph.

This already gives you more “you” and less generic model pattern.

- Use Clever Ai Humanizer very narrowly

I agree with both of them that Clever Ai Humanizer is one of the few actually usable free tools (the monthly word limit is generous), but I don’t run whole articles through it in one go. That’s where I disagree a bit with the “long chunks” approach.

I prefer:

- 300–600 word sections at a time

- Switch styles per section: Casual for intro/conclusion, Simple Academic for body

- Then immediately strip or tweak any phrases that sound like they belong in a corporate email (all those “in conclusion,” “furthermore,” etc.)

You’re not just trying to dodge detectors, you’re trying not to sound like every other person using the same preset.
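If you want to split a long draft into sections of that size without cutting paragraphs in half, something like this rough sketch would do it. The function name `chunk_paragraphs` and the 600-word default are my own choices for illustration, and it assumes paragraphs are separated by blank lines. It has nothing to do with how any particular tool works internally.

```python
def chunk_paragraphs(text: str, max_words: int = 600) -> list[str]:
    """Group blank-line-separated paragraphs into chunks of at most max_words.

    A single paragraph longer than max_words is kept whole as its own chunk.
    """
    chunks: list[str] = []
    current: list[str] = []
    current_words = 0

    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        # Close the current chunk if this paragraph would push it over the limit.
        if current and current_words + n > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(para)
        current_words += n

    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Each chunk ends on a paragraph boundary, so you can edit sections one at a time and still paste them back together in order.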

- Don’t skip a “compression” pass

Detectors love fluffy, evenly-worded text. Human writing is messy and often too short.

After humanizing:

- Do a “summarize this into 70–80% of the length, keep all key points” pass with a free model.

- Then manually bring back only the extra detail you actually care about.

Counterintuitive, but tightening the text kills a lot of the AI rhythm.
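To make the 70–80% target concrete, here is a small sketch that works out the word range and checks whether a shortened version lands inside it. The helper names are made up for this example, and it counts words by splitting on whitespace, which is only an approximation.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def compression_target(text: str, low_pct: int = 70, high_pct: int = 80) -> tuple[int, int]:
    """Return the (min, max) word counts for a low_pct to high_pct compression."""
    n = word_count(text)
    # Integer arithmetic avoids floating-point rounding surprises.
    return n * low_pct // 100, n * high_pct // 100


def within_target(original: str, shortened: str) -> bool:
    """True if the shortened text falls inside the 70-80% range of the original."""
    lo, hi = compression_target(original)
    return lo <= word_count(shortened) <= hi
```

For a 1,000-word section this gives a target of 700 to 800 words.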

- Inject “imperfections” on purpose

You said you want to keep the meaning. You can do that while adding small, realistic bumps:

- Use 1–2 mild slangy phrases if it fits your persona.

- Leave a couple of non-critical mild redundancies. Humans repeat themselves.

- Vary punctuation: some short choppy sentences, then a longer blended one.

You do not need to add obvious typos everywhere, but a tiny bit of non-robotic inconsistency helps.
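If you want a rough number for how even your sentence rhythm is, a quick sketch like this can help. It is a naive measure of my own, not what any detector uses: it splits on `.`, `!`, and `?` followed by whitespace, so abbreviations like "e.g." will be mis-split, and the names `sentence_lengths` and `rhythm_summary` are just for illustration.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Return the word count of each sentence, using a naive punctuation split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_summary(text: str) -> dict[str, float]:
    """Summarize sentence-length spread; a stdev near 0 means very uniform sentences."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"sentences": 0, "mean": 0.0, "stdev": 0.0}
    return {
        "sentences": len(lengths),
        "mean": mean(lengths),
        "stdev": pstdev(lengths),
    }
```

Treat the output as a prompt to reread a paragraph, not as a score to optimize.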

- Stop obsessing over 0% scores

I’m going to disagree harder with the underlying goal: chasing “0% AI” on a single detector is a trap.

I’ve seen:

- Human-written stuff flagged as AI

- AI text flagged as human

So instead:

- Aim for “mixed” or “uncertain” across 2 different detectors.

- If one screams 100% AI, only fix that specific section, not the whole document.

- Free workflow recap (no paywalls, keeps meaning tight)

- You outline manually.

- Use a free model to expand bullets into paragraphs.

- Run each section through Clever Ai Humanizer with style picked for that part.

- Do a compression / tighten pass with any free LLM.

- Manually inject your own phrasing, examples, and a bit of natural mess.

- Spot check with 2 detectors and only tweak high-flag chunks.

If you’re doing this for graded work or anything with strict academic policies, be aware that “passing AI checks” is not the same as “allowed.” Detectors are not the only thing your teacher or institution cares about.

Short version: there is no “free GPTinf clone that never gets flagged,” and if you try to brute‑force detectors, you’ll burn time and still lose. Instead of repeating what @waldgeist, @boswandelaar and @mikeappsreviewer already covered, here is a different angle that keeps things free and focused on readability first, detection second.

1. Stop thinking in “AI vs human” and think “generic vs specific”

Detectors mostly hate:

- Super-generic phrasing

- Perfectly smooth structure

- Recycled transitions and cliché examples

You can keep using AI as long as you inject specifics that only you would write: your own examples, your own order of arguments, your own weird turns of phrase. That alone shifts the “fingerprint” more than yet another rewrite pass.

2. Free stack that actually stays manageable

You already heard about Clever Ai Humanizer. I would still recommend it, but for a different purpose than “detector evasion.”

Use it as a clarity polish tool, not as an “AI scrubber.”

Pros of Clever Ai Humanizer

- Genuinely generous free tier for long content

- Handles full sections without making them unreadable

- Styles (Casual / Simple Academic / Simple Formal) are predictable, which helps if you want consistent tone

- Does not usually break technical meaning, so decent for essays and reports

Cons of Clever Ai Humanizer

- Outputs are a bit “too clean” by default, so if you rely only on it, you end up with that familiar AI-like smoothness

- Often lengthens the text, which can look suspicious in tight word‑limit assignments

- Detectors react differently: one tool might love it, another still says “part AI,” so it is not a magic shield

- Everyone using the same presets starts to sound similar, especially if you never edit the output

That last point is why I do not agree with the idea of running giant 1500‑word slabs through any humanizer and calling it done.

3. A different workflow that avoids the usual loop

Instead of:

AI → Humanizer → Detectors → Panic edits

Try:

Outline → AI help per section → Your edit → Optional short humanizer pass

Rough breakdown:

- Draft a human outline (H2/H3 + bullet points).

- Use any free LLM to expand each bullet into 1 or 2 paragraphs.

- Read each paragraph and ask: “Would I ever say it like this?” If not, rewrite just the parts that sound fake.

- Only then, run the most robotic paragraphs through Clever Ai Humanizer in short chunks (300–500 words), mainly to smooth grammar and repetition.

- Last, deliberately reintroduce a bit of your own mess: short sentences, one or two unusual phrases, a specific anecdote.

Notice the difference: Clever Ai Humanizer is a supporting tool, not the main engine.

4. Where I disagree with some points from others

- I do not love multiple AI rewrite layers (AI → humanizer → paraphraser → compress). After 2 or 3 passes, the text turns into statistical soup, not something you would actually say.

- I am less focused on detector scores. “Mixed” or “partially AI” on two tools is fine. Chasing 0 percent everywhere gives you bloated, over-processed writing.

- Breaking into very big chunks can hurt you. Smaller sections make it easier to inject your voice, and they are faster to tweak if one part gets flagged.

5. Competitor advice vs this approach

What @waldgeist and @boswandelaar suggest is solid if your top priority is gaming detectors. What @mikeappsreviewer adds about personal voice is closer to what actually works in practice.

The missing piece is restraint: every extra automated pass costs you uniqueness. Use Clever Ai Humanizer for readability and consistency, then lean on your own quirks for the “human” part.

If you do that, you end up with content that:

- Reads better

- Is easier to defend as your own

- And usually lands in the “uncertain/mixed” zone on detectors, which is realistically as good as it gets for free.