I recently submitted an important paper and got flagged by an AI plagiarism checker, but I wrote everything myself. I’m worried about false positives and my grade. Has this happened to anyone else? How reliable are these tools, and what should I do to clear things up?

Man, AI plagiarism checkers can really be a crapshoot sometimes. You’d think the tech would be spot-on by now, but nope—false positives are way more common than people realize. A lot of these checkers, especially the newer ones, don’t fully get how to distinguish between stuff that’s “original” and common knowledge, or recognize phrases that just sound similar to thousands of things already written on the internet. They’re way too sensitive sometimes, and just flag anything their algorithm remotely recognizes.

Happened to me once with a paper I sweated over for days: outta nowhere, I got pinged for “similarity” because I used a few textbook phrases. Turns out, the checker’s database had bits from the very textbook we were all reading for the class. Super frustrating.

In reality, these tools are just aids—they’re not perfect judges. Context and intent totally matter, but algorithms aren’t “smart” enough (yet) to understand that nuance. If you genuinely wrote your work, don’t panic if you get flagged. Most profs know these tools aren’t infallible, and usually, you can clarify what’s going on.

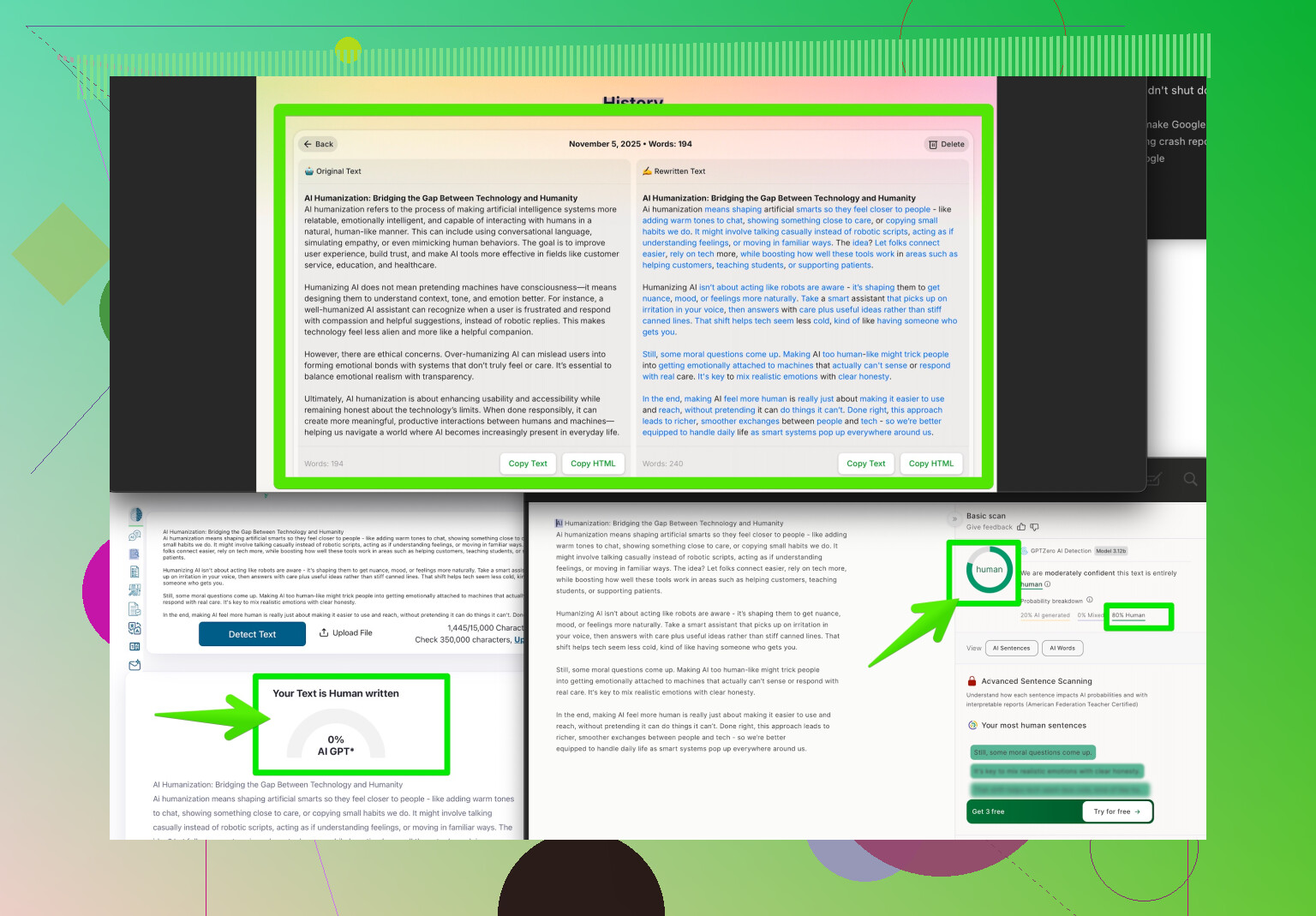

By the way, if you’re worried about this happening again, people are using stuff like “humanizer” tools to make the text look less “AI-written” and less likely to trigger false alarms. One I’ve seen recommended is called the Clever AI Humanizer. Supposedly, it tweaks your writing just enough to avoid those annoying false positives but keeps your meaning intact. If you want to cover your bases, it’s worth looking at how it works. Can’t hurt.

But yeah, don’t beat yourself up—AI checkers are good as a first pass but definitely not the final word. Always read the flagged report over, and talk to your professor if it makes no sense. Most have seen enough of these false alarms to take them with a grain of salt.

Honestly, AI plagiarism checkers are a classic “good in theory, questionable in practice” situation. They’re not terrible: if you grab chunks of text off Wikipedia or a published article, they’ll spot it. But when it comes to telling the difference between common knowledge, widely used academic phrases, and actual copying, they’re pretty hit-or-miss. Not shocked at all you got flagged even though you wrote everything yourself. I’ve seen it more than once: people get flagged for using phrases like “in conclusion, further research is needed,” which every student on the planet uses.

Gotta partially disagree with @viajeroceleste, though—these “humanizer” tools are sort of a band-aid, not a cure. Tweaking wording just to dodge robotic detectors feels like putting duct tape on the problem; the core issue is that these AI checkers lack true contextual understanding. Sure, Clever AI Humanizer and the like can help you not get unfairly flagged, but I’m more of a fan of transparency: always have a conversation with your prof if something looks off in the report.

Real talk: professors and admins usually know these checkers aren’t infallible. Print out (or screenshot) the report, highlight what you think is unfair, and point out your sources or explain your logic, especially if you’re using standard terminology or cited material. They’d honestly much rather clear up a false positive than accuse someone unfairly.

As for reliability, I’d say AI plagiarism checkers are somewhere between a metal detector at a beach and a dog sniffing for snacks: occasionally useful, mostly overzealous, and sometimes missing the real culprit entirely. Don’t let it rattle you. They’re a tool, not a judge and jury, and the human element still matters WAY more.

If you’re looking for more practical insight into how people deal with this mess and get around AI detectors, there’s a helpful rundown of Reddit user experiences on humanizing your writing for AI checkers. Lots of real stories, none of that sales pitchy vibe.

So yeah, keep your receipts, push back if the machine gets it wrong, and don’t sweat it too hard—this happens a LOT more than schools like to admit.

Let’s not sugarcoat it: AI plagiarism checkers are like that one overzealous hall monitor—eager, sometimes helpful, but often just flagging half the school for running in the hallway. What’s wild is how much variation there is between checkers. Some are OK with standard phrases, others lose their electronic minds if you dare say “in summary.”

@boswandelaar nailed it about common knowledge getting wrongly tagged, and @viajeroceleste brought up how humanizer tools might help, but let’s poke at that for a sec. The Clever AI Humanizer is making noise because it tweaks your text enough so algorithms chill out on the alarms. Pros? It’s quick, keeps your original meaning, and (honestly, this matters) can take pressure off when everything feels risky. Cons: It’s not magic—the fixes are just surface-level. Sometimes it’ll rephrase your straightforward sentence into something that sounds just kinda… off, ya know? Plus, you’re adding a step instead of tackling the root problem: machines struggle with nuance and don’t get academic conventions the way people do.

Neither of the replies above mentioned that what you actually wrote sometimes gets lost when you start humanizing to game the bots. If your program expects a dry, formal, academic tone and your “humanized” draft comes out breezy, professors might get suspicious about the style shift even if the content’s original. There’s also the question of cost if you’re using premium versions of these tools.

Honestly, my go-to is to save drafts, cite like your future job depends on it, and treat AI reports as “suggestions” rather than gospel. If you do use Clever AI Humanizer, do a quick read-through to make sure your voice stays yours—no checker will ever care as much about your grade as you do. And negotiate, don’t panic; ultimately, talking to a real human (like your prof) clears up almost everything these robots bungle. Don’t let detection drama make you second-guess legit originality.