I’m thinking about using Walter Writes AI to help draft parts of a research paper, but I’m worried about plagiarism, detection by AI checkers, and whether professors consider this tool acceptable. Has anyone used it for college essays or academic work, and did you run into problems with originality, citations, or academic integrity policies? I need advice before I risk submitting anything written with it.

Walter Writes AI Review: Honestly One of the Weakest ‘AI Humanizers’ I’ve Tried

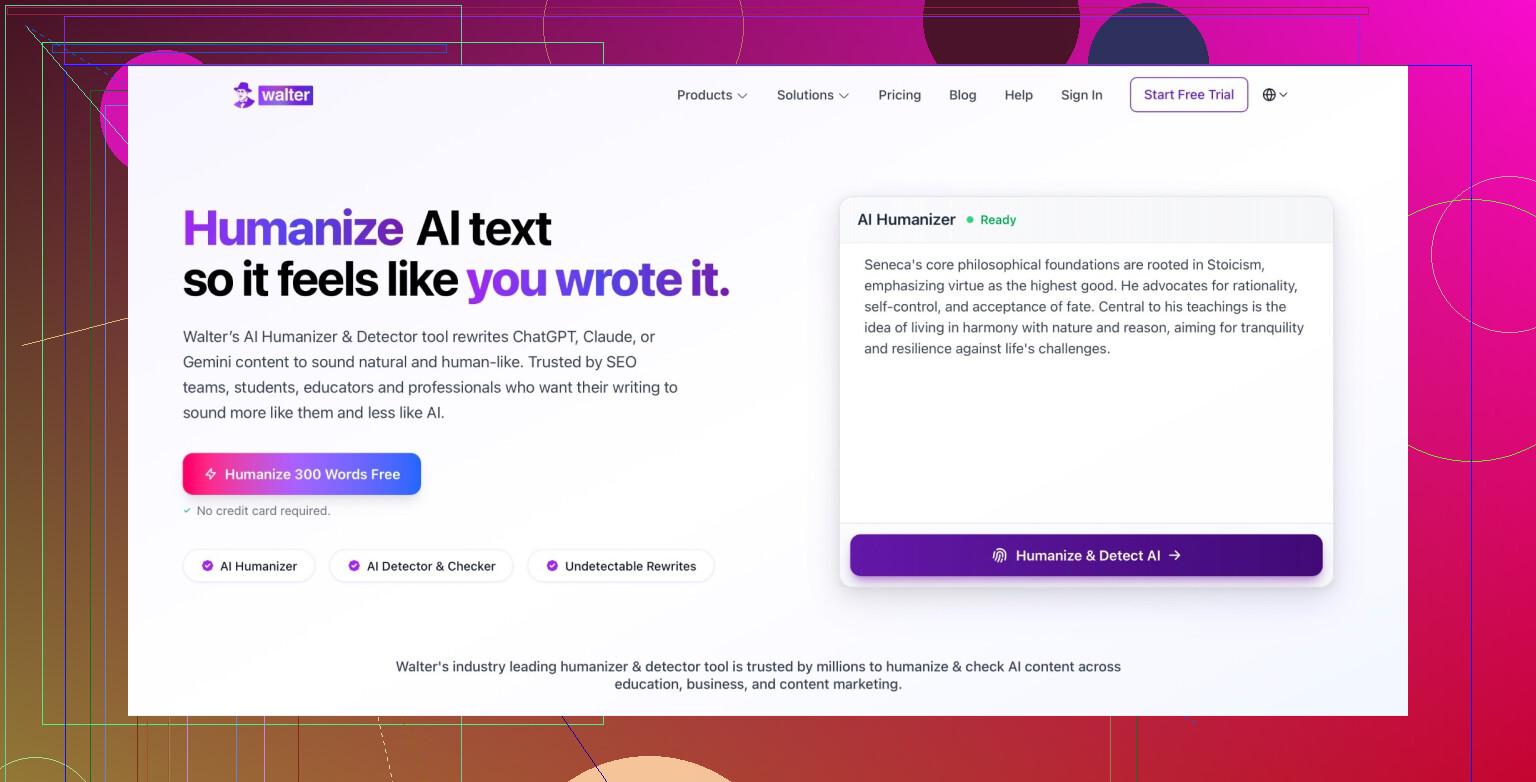

What Walter Writes AI Claims To Be

Walter Writes AI markets itself as this “premium” AI humanizer / essay generator that can slip past all the usual AI detectors. If you’ve searched stuff like “bypass AI detector” or “undetectable essay writer,” you’ve probably seen their ads already.

It is clearly targeted at students: polished landing page, big promises, lots of “we can beat AI detection” language. The pitch is basically:

- Paste your AI text

- Click a button

- Get something that supposedly looks 100% human to every detector

In reality, that’s not what I got at all.

The tool feels like one of those apps that spends more on ads than on actually making the product work. Under the hood, it’s just a mediocre rewriter that doesn’t hold up against actual detectors, especially when you compare it to tools like Clever AI Humanizer that are free and perform better.

Pricing & Value: Where It Really Falls Apart

Let me just say this straight: Walter Writes AI is not cheap for what it does.

The moment you land on the site and start trying to use it, you get funneled toward a paid plan. No real “try it properly first” experience, just “pay up” energy.

Here is how it stacks up against Clever AI Humanizer:

- Walter Writes AI

  - Paid monthly subscription

  - Tight word caps

  - Feels like there are gotchas if you forget to cancel or upgrade

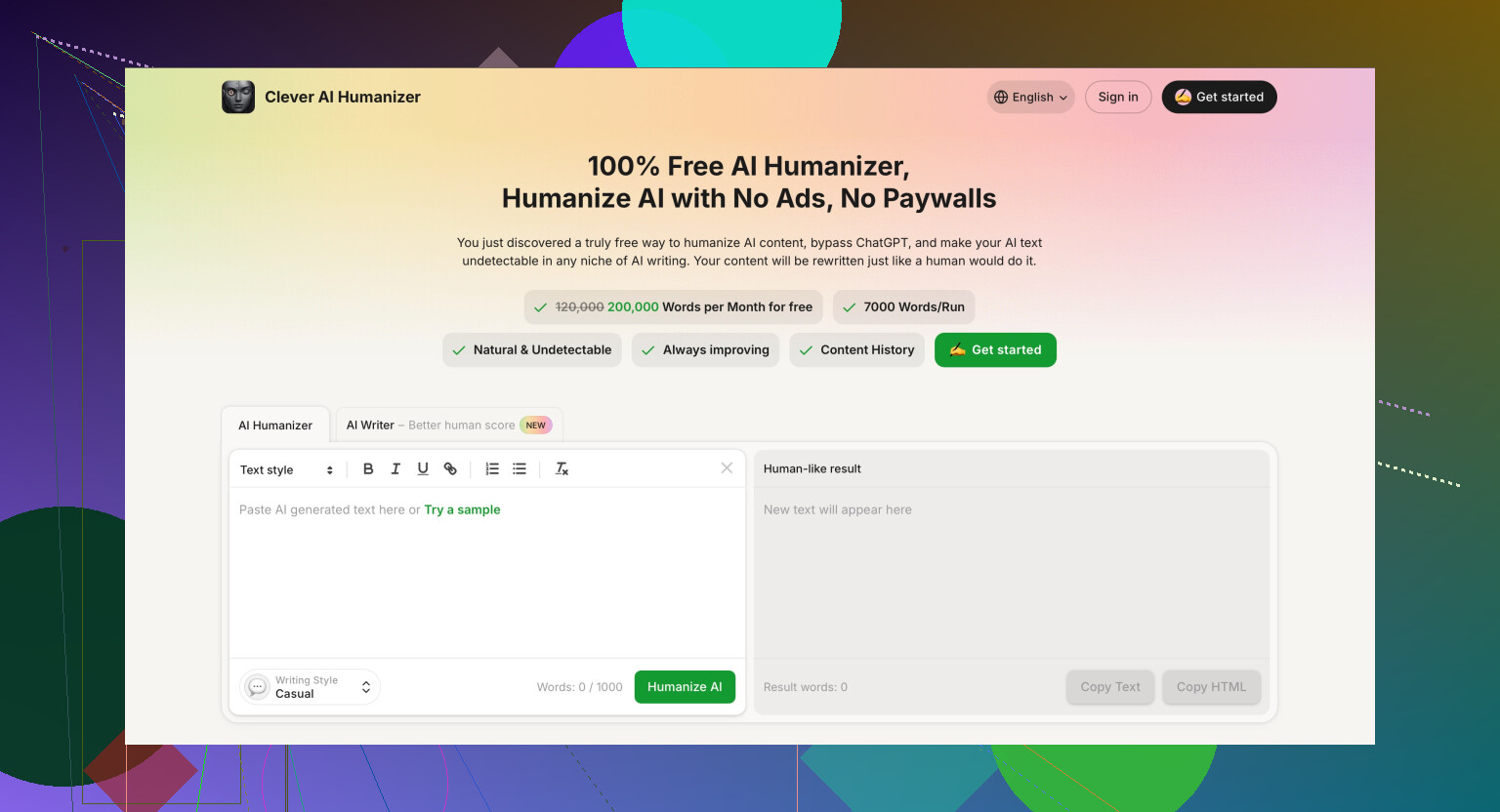

- Clever AI Humanizer

  - 100% free

  - Around 200,000 words per month

  - Up to 7,000 words per run

So the math is simple: Walter wants you to pay to be limited, while there are tools out there that give you more capacity and better performance for free. I honestly don’t see where the “value” is supposed to be.

Why would anyone pay to be throttled when an alternative literally lets you push thousands of words per run at no cost?

Performance Test: How It Did Against Detectors

I ran a pretty basic test:

- Wrote a standard essay using ChatGPT

- Confirmed it registered as 100% AI-generated on detectors

- Ran that same essay through Walter Writes AI

- Then ran a separate copy through Clever AI Humanizer

- Checked both outputs with multiple AI detectors

Here is how the results shook out:

| Detector | Walter Writes AI Result | Clever AI Humanizer Result |
|---|---|---|
| GPTZero | Detected | Undetected |
| ZeroGPT | Detected | Undetected |
| Copyleaks | Detected | Undetected |
| Overall | DETECTED | UNDETECTED |

So when Walter says it “bypasses detection,” that did not match what I saw. Detectors still tagged it as AI across the board. On the other hand, the text processed by Clever AI Humanizer passed on all three.

There was nothing subtle here either. The Walter output felt like basic paraphrasing: some synonyms, shuffled sentence structure, the usual quick-fix style. Detectors are already trained on that kind of pattern. They are not fooled by that anymore.

Meanwhile, the Clever AI Humanizer output looked more like something an actual person would write: more varied phrasing, different rhythm, less robotic structure. That is probably why it slid under those detection tools.

If you want to experiment with it yourself, this is the tool I used:

Clever AI Humanizer:

https://aihumanizer.net/

And if you want to see a broader list of AI humanizers people are talking about, there is a whole Reddit thread collecting options and experiences:

Best AI humanizer tools discussion:

https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

In my experience, Walter Writes AI feels overpriced, underpowered, and outclassed by tools that don’t even charge you.

Short answer: no, Walter Writes AI is not “safe” for academic papers in the way you’re hoping, and the whole premise is kind of a trap.

A few points, trying to separate the tech from the ethics:

On AI detection & Walter specifically

- Tools like Walter are built around a promise that you can “beat AI detectors.” That’s already a giant red flag in an academic context.

- As @mikeappsreviewer showed, it doesn’t even do that job very well: the output still pings as AI on multiple detectors, which matches what I’ve seen from similar “undetectable” apps. It’s mostly shallow paraphrasing.

- Detectors themselves are unreliable and noisy, but relying on a paid tool that can still light them up is honestly the worst of both worlds.

Plagiarism & academic integrity

- The plagiarism risk isn’t just about copy-pasting from somewhere; it’s about misrepresentation of authorship. If your university has a policy that counts uncredited AI writing as academic misconduct (many do now), then using Walter to generate or “humanize” full paragraphs and submitting them as your own is a problem, even if the text is technically “original.”

- A lot of these tools also don’t tell you what models or data they’re built on, so you have no idea if they’re remixing text in a way that could be too close to some training content.

What professors actually care about

- Most profs are not sitting around testing Walter vs Clever Ai Humanizer vs ChatGPT. They care about:

  - Did you do the thinking, reading, and argument building?

  - Can you explain and defend what’s in the paper, verbally, with no tools?

  - Are you following the course / university AI policy?

- In a lot of syllabi now, “AI text that is not acknowledged or permitted” is treated like ghostwriting. It does not matter whether Walter claims “human text,” or whether GPTZero says it’s AI or not.

Using AI in a way that’s actually safer

If you want to use AI at all for a research paper, the relatively safer uses are:

- Brainstorming research questions or angles

- Getting structural suggestions (outline, headings, logical flow)

- Summarizing your own notes

- Rewording sentences that you then heavily edit again

But even here, check your institution’s policy and, if possible, ask your professor directly. Some allow this with disclosure, some ban it outright, some encourage it but want a methods note.

On “humanizers” in general

- From what’s been reported, tools like Clever Ai Humanizer are better than Walter at fooling dumb detectors, and it sounds like they’re much more generous with word count.

- But: using any “AI humanizer” to pass off AI text as your own work is still the same academic integrity problem. Even if Clever Ai Humanizer gets you through Turnitin’s AI check, that doesn’t magically make it acceptable to your professor. It just makes it harder to catch.

- So if you’re thinking “I’ll use Walter, and if that fails I’ll use Clever Ai Humanizer so nobody can tell,” you’re basically just shopping for a better way to do something that could get you in serious trouble if discovered.

What I’d actually do in your position

- Use standard AI (ChatGPT, etc.) outside the final drafting process: help with understanding concepts, brainstorming, maybe outline suggestions.

- Write the actual paper yourself, from your own notes and readings.

- If your school allows AI help, add a short statement like: “I used an AI assistant to generate an initial outline and to rephrase some sentences. All content was reviewed and revised by me.” Adjust based on policy.

- Avoid “Walter” type tools that have “bypass detection” as their main selling point. That marketing pitch is exactly what universities are increasingly targeting when they write misconduct policies.

So: Walter Writes AI is not “safe” in the sense of “I can use this to write chunks of a research paper and not worry.” Technically it seems weak at detection evasion, and ethically it puts you right in the blast radius of academic misconduct rules.

If you want AI in your workflow, use it transparently, for support rather than ghostwriting, and stay away from anything whose headline feature is “we’ll help you cheat the detectors.”

Short answer: using Walter Writes AI to write chunks of an academic paper is not “safe,” and the risk is way bigger than the benefit.

A couple of angles that haven’t been hit as hard by @mikeappsreviewer and @yozora:

Detectors are moving targets

- Tools like Walter are basically chasing last month’s detector behavior.

- Even if it somehow slipped past GPTZero today, your paper might be rechecked in a future case review with updated tools. Universities keep work on file. Retroactive busts happen.

- So “it passed the checker once” is not a real safety net.

Uni policies care about intent, not brand names

- Your professor does not care if it was Walter, ChatGPT, or “Bob’s Super Humanizer 3000.”

- If your institution’s policy says “uncredited AI-generated text = misconduct,” then using an AI humanizer to mask that text just makes it worse, because now it looks intentional and deceptive.

- Even if the output isn’t flagged as AI, if they get suspicious and ask you to explain your reasoning, methods, or reproduce parts of the argument and you can’t, that’s enough.

Walter’s specific problem

- From what’s been tested already, Walter looks like a glorified paraphraser. That kind of surface-level synonym shuffle is exactly what detectors were trained to notice first.

- So you’re paying for a tool that:

  - Exposes you to academic integrity risk

  - Still gets tagged as AI a lot of the time

- That’s like buying a fake ID that still says “UNDER 21” on it.

On plagiarism specifically

- Even if Walter’s text is technically “original” and shows 0% match in plagiarism checkers, you can still be nailed for:

  - Misrepresentation of authorship

  - Not citing AI assistance when required

- Plagiarism in academia is not only about copying sentences; it is also about who actually authored the work.

What’s relatively safer if you still want AI in your workflow

- Use AI for:

  - Clarifying concepts from the literature

  - Idea generation, brainstorms, alternative framings

  - Getting draft outlines or possible structures

- Then write from scratch using your own words and notes.

- If your uni allows it, disclose the use briefly in a footnote or methods / acknowledgements section.

About Clever Ai Humanizer

- If you’re just curious from a tech perspective and want to play with AI detection, Clever Ai Humanizer seems to outperform Walter at fooling basic detectors and gives you more words.

- That said, using any “AI humanizer” (Clever Ai Humanizer included) to secretly generate your paper is still the same academic integrity problem. It might be more effective technically, but it doesn’t become more acceptable just because detectors are fooled.

What most profs actually notice

- Sudden jump in writing quality or style between assignments.

- Highly polished, generic prose that doesn’t match how you talk in class or write on exams.

- Inconsistent argumentation: clean sentences built on shallow or incorrect understanding.

- When they see that, they don’t need an AI checker to start asking questions.

If your goal is “I want help but I don’t want to get burned,” Walter Writes AI is the worst place to sit: marketed for cheating, weak at its main promise, and completely misaligned with how universities are writing their policies now.

If your goal is actually to improve your writing and still sleep at night, use a regular AI assistant for support, stay within your school’s rules, and keep every word of the final draft under your own control.