I’ve been considering using WriteHuman AI to help improve my writing, but I’ve seen mixed opinions online and it’s hard to tell what’s real. Can anyone share a genuine WriteHuman AI review, including how accurate, helpful, and safe it is, and whether the pricing and features are actually worth it for regular users like me?

WriteHuman AI review from someone who paid for it so you do not have to

I tried WriteHuman because their site leans hard on claims about beating GPTZero. My tests did not match the marketing at all.

What I tested

Source text

I used one AI-generated article, then ran it through WriteHuman three separate times with slightly different tone options. So same base content, different runs.

Detectors

I checked the outputs on:

- GPTZero

- ZeroGPT

Nothing fancy, just copy, paste, results.

How it did on GPTZero

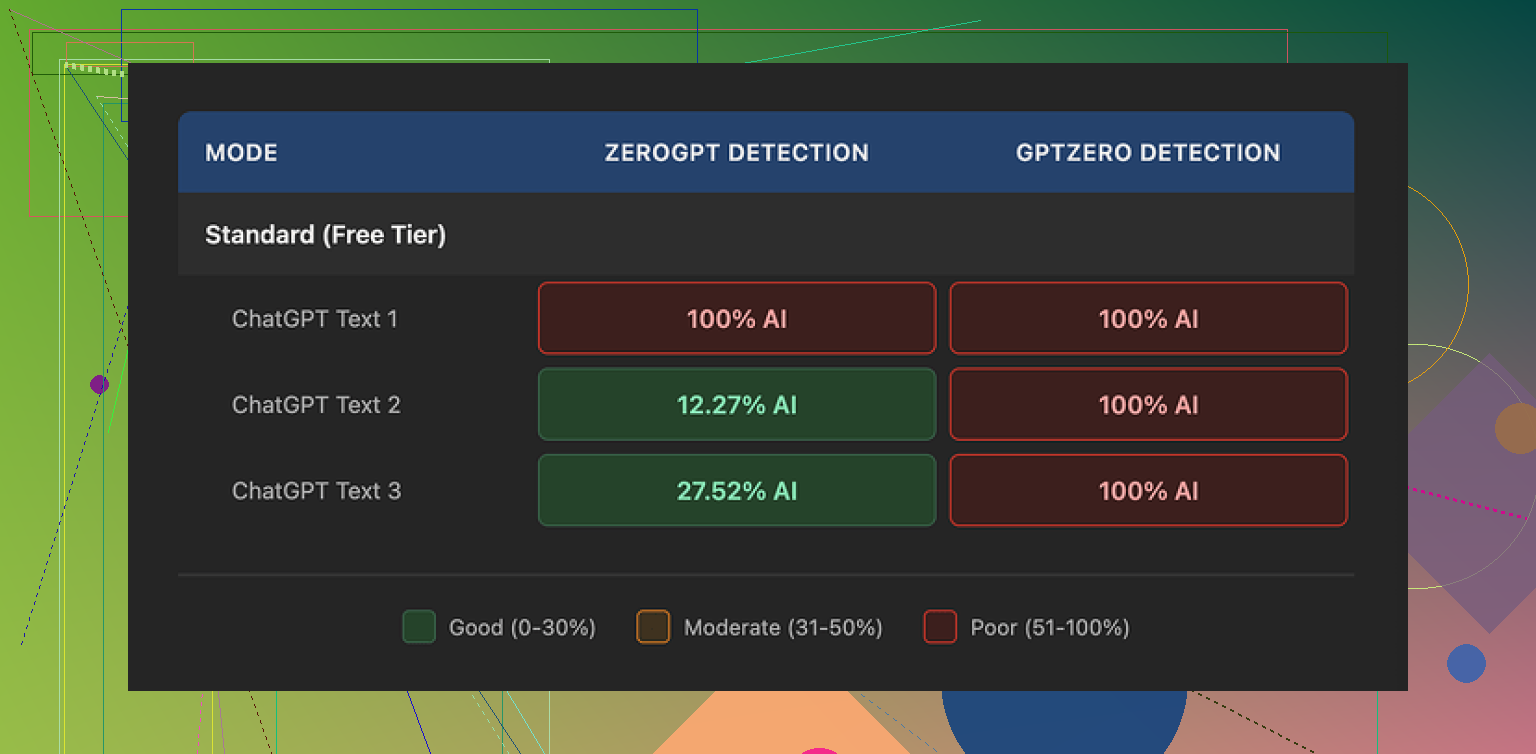

All three WriteHuman outputs came back as 100% AI on GPTZero.

Not “partially human”, not mixed. Full red.

That was with default settings on GPTZero, and I tested the exact same text that WriteHuman had “humanized”.

So for the specific detector they call out in their own marketing, my experience was: complete fail across all three samples.

How it did on ZeroGPT

ZeroGPT gave a more mixed picture:

- First sample: 100% AI

- Second sample: about 12% AI

- Third sample: about 28% AI

So ZeroGPT sometimes thought the text looked more human, but it did not behave in a stable way. Same base article, similar settings, wildly different scores.

If you are hoping for consistent “safe” outputs for long-term use, this variation feels risky.

Writing quality and weird behavior

This part surprised me more than the detection results.

WriteHuman’s output was not only different in tone, it swung around in a way that felt off. Within a single article, the “voice” jumped from formal to casual to slightly stiff, as if multiple writers were swapping paragraphs without reading each other.

I also spotted a straight typo in one of the outputs: “shfits” instead of “shifts”.

On one hand, I get what they are doing. Imperfections and tone jumps might trick some detectors because the text looks less machine-smoothed.

On the other hand, if you want to hand this in as homework, publish it on a blog, or send it to a client, that sort of random tone change and typo starts to hurt you. You end up editing heavily anyway.


Pricing and plans

WriteHuman is not cheap if you are just testing the waters.

- Basic plan: $12 per month (billed annually)

- Requests on Basic: 80 per month

All paid tiers unlock an “Enhanced Model” plus extra tone choices. I did not see a clear technical explanation of what that model does differently beyond the marketing labels.

Two problems here:

- Their own terms say they do not guarantee detector bypass for any tool.

- They have a strict no refund policy.

So if it fails for your use case, you are stuck. No refund, no credit. You paid to run some text through a filter, got flagged anyway, and that is the end of it.

Data usage and privacy

This part is easy to miss but important.

Buried in their terms is a line stating that your submitted text is licensed to them for AI training.

So once you paste your content into WriteHuman, it is in their training pool. If that bothers you, there is no opt out. Your only real option is to not use the service at all.

That is especially relevant if you work with:

- Client documents

- Drafts under NDA

- Private company materials

- Unpublished research

If you want tight control over where your text ends up, this policy alone makes WriteHuman a non-starter.

Comparison with Clever AI Humanizer

I tried another tool around the same time: Clever AI Humanizer.

With that one, my experience was different:

- Better scores on detectors overall in my own testing

- No paywall for basic use when I tried it

I am not saying it is perfect or foolproof. Detectors shift, models change, and no tool is magic. I am saying that for the same kind of inputs, Clever AI Humanizer performed better in my hands and did not shove a subscription at me before I saw what it could do.

When WriteHuman might still make sense

If you:

- Already paid

- Do not care much about GPTZero

- Only need small edits and do not mind manual cleanup after

then you might squeeze some value out of it, especially if you like playing with different tones and do not rely on one specific detector.

For most people I talk to that is not the use case. They want:

- Higher odds of passing detectors

- Clean text they can use with minimal edits

- Clear policies on data usage

- A way to test before paying or a refund option

WriteHuman, at least at the time I tested, missed on several of those points.

My takeaway

If your main goal is to dodge GPTZero, my tests say WriteHuman falls short. Three runs, three times flagged as 100% AI.

If you care about consistent writing quality, you will spend time fixing tone shifts and errors.

If you worry about data privacy or lack of refunds, their terms are not in your favor.

If you are price sensitive or want to experiment first, tools like Clever AI Humanizer felt safer to try, with better detection results in practice and no upfront paywall when I used it.

I used WriteHuman for about a week on paid credits to clean up blog posts and emails, so here is a straight, no-fluff take that lines up with some of what @mikeappsreviewer said, but from a different angle.

- Accuracy and AI detection

I tested it on:

- One 1,200-word blog post from GPT‑4

- Two shorter emails

Detectors I tried:

- GPTZero

- ZeroGPT

- Copyleaks

Results were mixed.

GPTZero

- Long post: still flagged as 100% AI every time

- Emails: sometimes dropped to “mixed,” never “likely human”

ZeroGPT

- Long post: scores bounced around between ~20% and ~80% AI on separate runs

- Emails: one went down to “human” range, the other stayed flagged

Copyleaks

- Slight improvement, but still “AI content” most of the time

If your goal is “I need this to look human to detectors consistently” you will not get that. It behaves more like a randomizer than a reliable mask.

- Helpfulness for writing quality

For improving your writing, I’d rate it as “okay but not worth the price.”

What it did well:

- Added some varied sentence structure

- Inserted small human-like fillers and imperfections

- Sometimes improved clarity in short paragraphs

What hurt:

- Tone jumped around inside the same piece

- Some odd word choices that felt like someone leaning on a thesaurus too hard

- I had to edit most outputs for:

  - Tone consistency

  - Typos

  - Repetitive phrasing

If your writing is weak and you need a quick first pass, it helps a bit. If you write decently and care about style, you will spend time fixing what it outputs.

- Speed and usability

- Web app was stable and fast for me.

- Interface is simple. You paste, pick tone, get output.

- No advanced controls for structure, formatting, or strict style rules.

So from a workflow angle, it does not save much time once you factor in cleanup.

- Privacy and terms

This is the biggest red flag for me.

Their terms give them a license on your input for AI training. No opt out when I used it.

If you:

- Handle client work

- Deal with any confidential docs

- Write under NDA

I would not run that text through WriteHuman at all. The mix of no refund and training rights on your content is a bad combo.

- Pricing vs value

Pricing felt high for what you get, especially with:

- No guarantee on detectors

- No refund policy

- Inconsistent outputs

If you experiment a lot, you burn through the quota fast. For casual writers or students, the price does not make much sense.

- How I use tools now

My current setup:

- I write a rough draft with an AI model.

- I manually rewrite key sections in my own voice.

- I run it through a “humanizer” only as a last step when I know detectors are strict.

For that last step, I got better, more stable results with Clever AI Humanizer than with WriteHuman. It is not magic, but for me:

- Text felt more consistent in tone.

- Detector scores were more predictable.

- I did not get forced into a subscription right away.

Again, not perfect, but if you are comparing the two, Clever AI Humanizer is worth trying before you spend money on WriteHuman.

- When WriteHuman makes sense

I think it only fits you if:

- You already accept AI detection risk.

- You want to “mess up” AI text a bit so it looks less polished.

- You do not mind editing your final draft.

- You do not work with sensitive content.

If your top priorities are:

- Passing GPTZero-type checks

- Strong, consistent writing

- Clear data control

- Ability to test more before paying

Then I would look at alternatives like Clever AI Humanizer or even a manual rewrite workflow instead of locking into WriteHuman.

I used WriteHuman for a couple weeks alongside the same kind of tools @mikeappsreviewer and @byteguru mentioned, but came away with a slightly different angle.

For writing help specifically, I’d say WriteHuman is… mediocre but not useless.

Where I disagree a bit with them:

If your draft is already your own writing (not AI) and you just want light smoothing, it’s okay. It can:

- Break up long, clunky sentences

- Add a bit of variation so everything doesn’t sound like a robot lawyer

- Occasionally clarify a messy paragraph

In that scenario, I actually liked some outputs and kept maybe 50–60% of what it suggested. It’s not a full rewrite tool, more like a jittery proofreader that had too much coffee.

Where I agree completely:

- As an AI detection bypass tool, it’s unreliable. In my runs, GPTZero still nailed it on anything that started as AI. ZeroGPT was all over the place, same as they described. If your main goal is “don’t get flagged,” this is not a dependable solution.

- Tone consistency is rough. Within one article it can swing from casual to weirdly formal. If you care about a stable voice, you’ll be patching it up a lot. At that point you might as well just rewrite it yourself.

- The no‑refund policy plus “we can train on your data” terms are a big nope if you touch client work, essays tied to your identity, or anything private. That combo makes it hard to justify for serious use.

Pricing vs value: For a student or casual blogger, the subscription is hard to justify given the cleanup you still have to do and the risk of detectors still flagging it. For a business writer, the data usage terms are more of a blocker than the price, honestly.

What I ended up doing:

- I now treat “humanizers” as last mile noise injectors, not magic cloaks.

- I write (or co-write) with an AI, then manually rewrite key parts in my own voice.

- Only at the very end, when I really need to soften the AI footprint, do I run a detection-sensitive piece through something like Clever AI Humanizer instead of WriteHuman. In my case, Clever AI Humanizer gave a more stable tone and somewhat more predictable detector scores without locking me into a paywall immediately.

So, is WriteHuman “worth it” for improving writing?

- If you want a gentle, imperfect rephrasing tool, don’t care about data reuse, and are fine editing after: maybe

- If you care about AI detectors, privacy, or polished, consistent style: there are better workflows and tools, and Clever AI Humanizer slotted into my stack much more naturally.

TL;DR: I wouldn’t buy WriteHuman specifically for detection or as my main writing improver. At best it’s a niche helper that you still have to babysit.